Title

SYN7

Keywords

Artificial Intelligence, Emotion, Colour (theory), Light, Sound, Facial Analysis, Computer vision, Synesthesia

What?

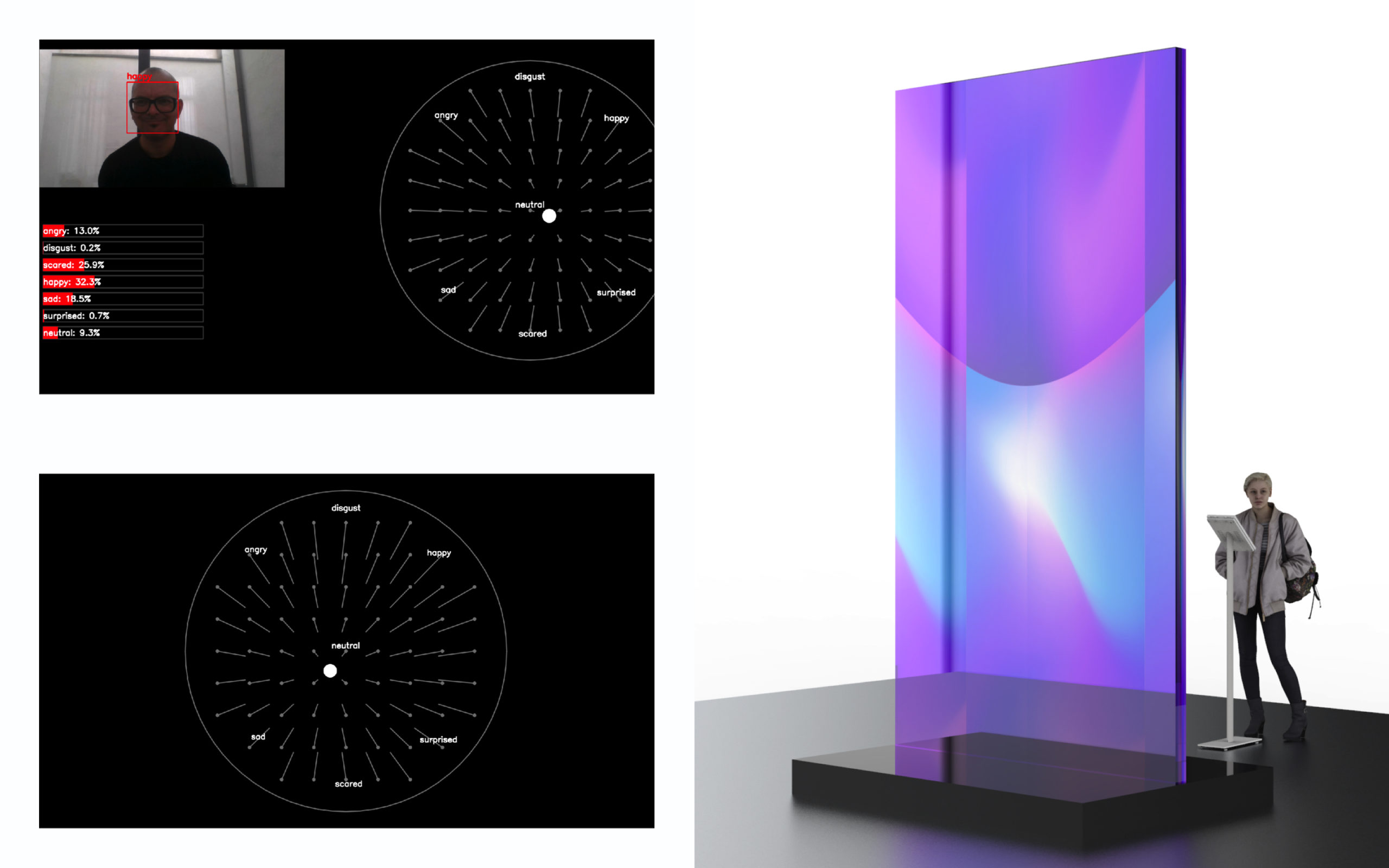

We present a new work in progress that explores the possibilities of, and existing methods for, translating emotion into sound, light, colour and physiological signals. In the name SYN7, the 7 refers to the seven universal human emotions and SYN to synesthesia.

What do we see?

We see a large transparent sheet of plexiglass (or glass) illuminated by LEDs built into the frame that holds it. People can stand in front of a small camera, and their facial expressions, analysed by an AI, generate sound and light compositions.

How does it work?

A neural network is trained to analyse different facial expressions. The user's face becomes an ‘instrument’ that triggers and generates sound and light in a responsive way.
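
As a rough illustration of this idea, the sketch below shows one way the output of such a network could drive light and sound. Everything in it is an assumption made for the example: the seven emotion labels, the colour and pitch assigned to each, and the function name `blend_expression` are hypothetical and do not describe the installation's actual mapping. It assumes the network returns one score per emotion and blends the colours and pitches in proportion to those scores.

```python
# Hypothetical mapping from emotion label to (RGB colour, pitch in Hz).
# The labels, colours and pitches are illustrative assumptions only.
EMOTION_MAP = {
    "anger": ((255, 0, 0), 110.0),
    "contempt": ((128, 0, 128), 147.0),
    "disgust": ((0, 128, 0), 165.0),
    "fear": ((64, 64, 64), 196.0),
    "happiness": ((255, 200, 0), 440.0),
    "sadness": ((0, 0, 255), 220.0),
    "surprise": ((255, 255, 255), 660.0),
}


def blend_expression(scores):
    """Blend colour and pitch, weighted by per-emotion scores.

    `scores` maps emotion labels to non-negative numbers, for example
    the probabilities produced by a facial-expression classifier.
    Returns ((r, g, b), pitch_hz).
    """
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("scores must contain at least one positive value")

    r = g = b = pitch = 0.0
    for label, score in scores.items():
        (cr, cg, cb), hz = EMOTION_MAP[label]
        weight = score / total  # normalise so the weights sum to 1
        r += weight * cr
        g += weight * cg
        b += weight * cb
        pitch += weight * hz

    return (round(r), round(g), round(b)), pitch


if __name__ == "__main__":
    # A face read as mostly happy with a trace of surprise.
    colour, pitch = blend_expression({"happiness": 0.8, "surprise": 0.2})
    print(colour, pitch)
```

A weighted blend is only one possible design choice. It makes the light and sound shift gradually as an expression changes, whereas picking the single strongest emotion would give abrupt, discrete transitions.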