HALzheimer _ WATCHING AN AI SLOWLY FORGET

The exhibition title is a portmanteau of two words: Alzheimer and HAL. HAL 9000 (Heuristically programmed ALgorithmic computer) is a fictional artificially intelligent computer and the villain of the Space Odyssey series created by Arthur C. Clarke (1917-2008). HAL 9000 can talk like a human, recognise faces, read lips, interpret emotions and play chess. In the series it is depicted as a camera lens that looks like a red eye. When HAL 9000 is slowly shut down by the removal of its memory, it metaphorically develops Alzheimer’s.

Man and machine are not very different.

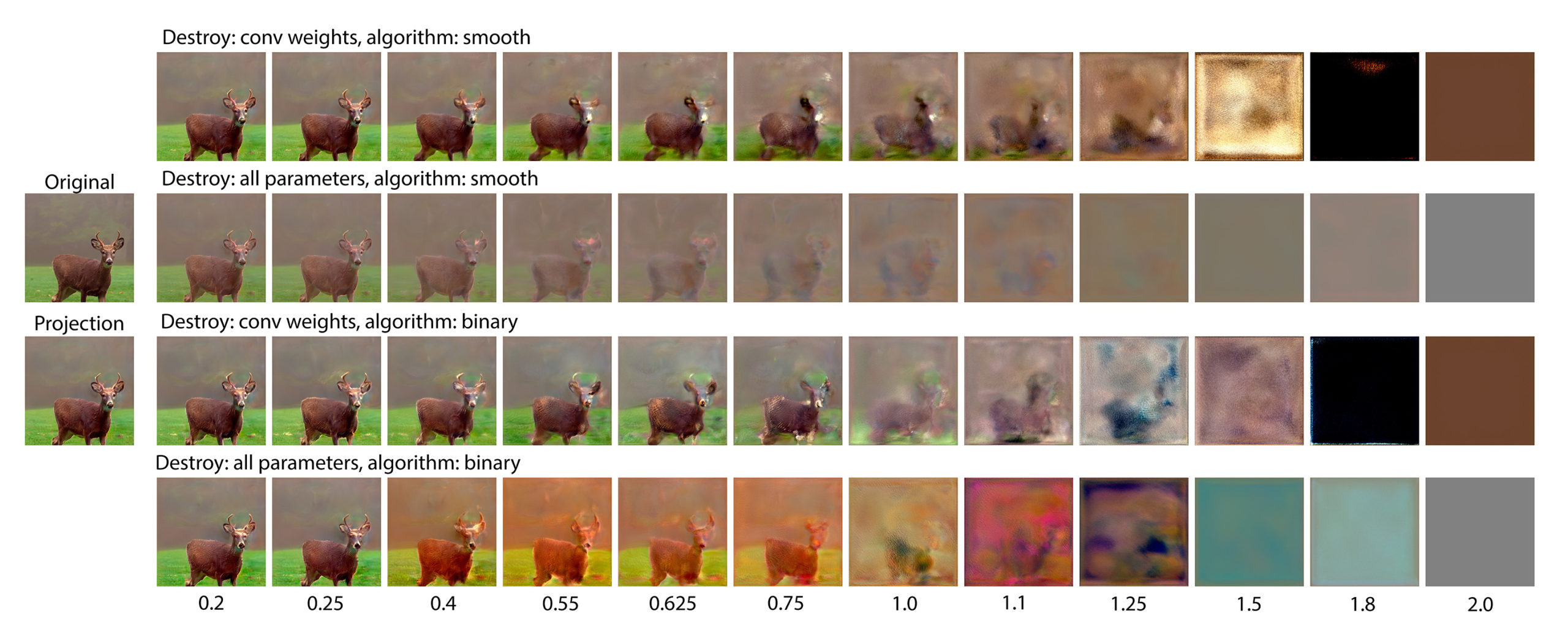

By letting a neural network generate an image and then reversing that process by destroying the network's weights, artist Frederik De Wilde creates a spooky experiment with Machine Learning. The custom software produced by Studio De Wilde uses custom algorithms to create projected, synthetic images, after which the same network gradually forgets what the image looks like. Neural networks are still mysterious, both in the human brain and in technology.
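The idea of a network forgetting as its weights are destroyed can be illustrated with a minimal sketch. This is not the Studio De Wilde software: it assumes a tiny two-layer network with random weights standing in for a trained image generator, and every name in it (`make_network`, `render`, `forget`, `decay_curve`) is hypothetical. Each weight is given a random moment at which it is erased, so the damage accumulates from step to step.

```python
import numpy as np

def make_network(rng, latent_dim=16, hidden=32, pixels=64):
    """Random two-layer network; an assumed stand-in for a trained generator."""
    return [
        rng.standard_normal((latent_dim, hidden)) / np.sqrt(latent_dim),
        rng.standard_normal((hidden, pixels)) / np.sqrt(hidden),
    ]

def render(weights, z):
    """Map a latent vector z to a flat 'image' of pixel values in (-1, 1)."""
    h = np.tanh(z @ weights[0])
    return np.tanh(h @ weights[1])

def forget(weights, schedule, fraction):
    """Zero every weight whose erase time lies below `fraction` (0 to 1)."""
    return [w * (u >= fraction) for w, u in zip(weights, schedule)]

def decay_curve(steps=5, seed=0):
    """Mean squared error against the intact image as weights are erased."""
    rng = np.random.default_rng(seed)
    weights = make_network(rng)
    # One erase time in [0, 1) per weight, so the damage is cumulative.
    schedule = [rng.random(w.shape) for w in weights]
    z = rng.standard_normal(weights[0].shape[0])
    original = render(weights, z)
    errors = []
    for i in range(steps + 1):
        damaged = forget(weights, schedule, i / steps)
        errors.append(float(np.mean((render(damaged, z) - original) ** 2)))
    return errors

if __name__ == "__main__":
    for step, err in enumerate(decay_curve()):
        print(f"step {step}: error {err:.4f}")
```

At step 0 nothing has been erased, so the rendered image matches the original exactly; at the final step every weight is zero and the image has collapsed to a uniform blank. What happens in between depends on which weights are lost first.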

Frederik De Wilde constructs an artistic context around this mystery in a way that is both light and uncanny. At first, the algorithmic face in the artwork seems to age quickly: lines appear under the eyes and at the edges of the face, and the hair thins and gets lighter. Afterwards, the colors begin to mix together, and the features of the face begin to ‘melt’. This effect can be compared to the evolution of the color schemes of the impressionist Claude Monet (1840-1926); the older he got, the more he started to use green and yellow. Our eyes and brain, and the networks that interconnect them, undergo changes that we rarely notice while they are happening.

HALzheimer accelerates this process and applies it to contemporary technology. AI tries to construct a reality in the same way a human brain does. How much do man and machine still differ? The collaboration between Arteconomy and Frederik De Wilde offers a unique opportunity to use technology to investigate how the brain deteriorates, and what the (visual) consequences are.

- John McCarthy (1927-2011), the American computer scientist who coined the term Artificial Intelligence (AI), defined it as follows: “The science and engineering of making intelligent machines, especially intelligent computer programs.” Artificial (made by people) Intelligence (the power of thinking) is the study of machines that can feel, make decisions and act like humans. They are able to acquire knowledge and skills, to understand changing situations and to know how to deal with them.

- Machine Learning is an efficient way to give an algorithm the necessary tools to learn something. A well-known application of Machine Learning is facial recognition. In HALzheimer, faces are not recognised but assembled by Generative Adversarial Networks (GANs), a type of neural network that learns from existing photos to produce, for instance, new realistic yet purely fictional faces. The interconnected neurons of the technological network determine the features (eye color, skin color, shapes, hair color…) in the same way that a human brain uses a network of neurons to construct a mental image of a face.